Apple’s new video patents: Who needs editors?

Following on from a patent for media collaboration for professionals, Apple have recently applied for a couple of video editing patents. Note that I’m not interested here in whether such software features should be patentable; I’m interested in what these ideas could mean for future software.

Smart transitions

The first patent is about applications automatically selecting a transition between clips based on content, metadata or ‘sideband data’.

…based on the analysis and/or comparison of adjoining video clips, or adjoining portions of video clips, a transition type may be selected. The transition type may be selected based on rules defined for particular content characteristics, such as motion characteristics, temporal characteristics, or color characteristics, or a combination of content characteristics

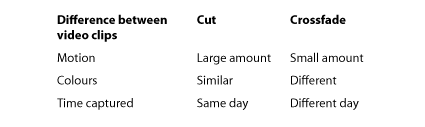

The patent includes some examples showing the choice between a hard cut and a crossfade:

For example, if it is determined that the content of adjoining video clips is temporally proximate (i.e., was captured on the same day), a hard-cut transition may be selected for transitioning between the adjoining video clips. If it is determined that the content of the video clips is temporally distant (i.e., was captured on different days), a crossfade transition may be selected for transitioning between the adjoining video clips. If it is determined that the content of the adjoining video clips contains a high amount of motion, a hard-cut may be selected for transitioning between the adjoining video clips. If it is determined that the content of the adjoining video clips contains a low amount of motion, a crossfade transition may be selected. Moreover, if it [is] determined that the color characteristics of two adjoining video clips are similar, a hard-cut transition may be selected; if the color characteristics are different, a crossfade transition may be selected.

The patent mentions that the rules governing which transitions are applied to which clip combinations can be set in application preferences.
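To make those rules concrete, here is a minimal Python sketch of how the three example rules might be combined. The patent does not say how conflicting rules are resolved, so the majority vote, the `Clip` fields and both thresholds are my own assumptions, standing in for whatever the application preferences would hold.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Clip:
    # Hypothetical per-clip analysis results; not field names from the patent
    captured: datetime
    motion: float        # 0.0 (static) to 1.0 (very busy), an assumed scale
    mean_color: tuple    # average (r, g, b), each 0-255


# Assumed thresholds, standing in for user-set application preferences
MOTION_THRESHOLD = 0.5
COLOR_DISTANCE_THRESHOLD = 60.0


def color_distance(a, b):
    """Euclidean distance between the two clips' average colours."""
    return sum((x - y) ** 2 for x, y in zip(a.mean_color, b.mean_color)) ** 0.5


def choose_transition(a, b):
    """Each rule votes for a hard cut (True) or a crossfade (False)."""
    votes = [
        a.captured.date() == b.captured.date(),               # same day
        (a.motion + b.motion) / 2 >= MOTION_THRESHOLD,         # high motion
        color_distance(a, b) <= COLOR_DISTANCE_THRESHOLD,      # similar colour
    ]
    # Majority vote is an assumption; the patent leaves the combination open
    return "hard cut" if sum(votes) >= 2 else "crossfade"
```

Two clips shot on the same day with plenty of motion would get a hard cut here even if their colours differ, because two of the three rules agree.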

As well as selecting a transition between two clips, Apple suggests that sequences as a whole can be analysed to see if some transitions have been applied too often:

In some implementations, transitions types may be changed or adjusted based on an analysis of an entire video clip sequence, including video clips and transitions. For example, the video clip sequence may be analyzed to determine if a particular transition type is used too frequently (e.g., more than a specified number of times, or more than specified ratio of a particular type of transition to all transitions as a whole) and, if the particular transition type is used too frequently, the transitions in the video clip sequence may be changed to generate more variation in the transition types. Transition types in a video clip sequence may be adjusted or changed based on the overall color composition and/or the overall amount of motion in the video clip sequence, for example.
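As a rough illustration of that sequence-level check, the following Python sketch flags transition types that exceed either a count or a ratio limit, then swaps every second occurrence for an alternative. The function names, the limits and the "every second one" replacement strategy are my own assumptions; the patent only says the transitions "may be changed to generate more variation".

```python
from collections import Counter


def overused_transitions(transitions, max_count=None, max_ratio=None):
    """Return the set of transition types used more often than allowed."""
    counts = Counter(transitions)
    total = len(transitions)
    overused = set()
    for kind, n in counts.items():
        if max_count is not None and n > max_count:
            overused.add(kind)
        if max_ratio is not None and total and n / total > max_ratio:
            overused.add(kind)
    return overused


def add_variation(transitions, overused, alternative):
    """Replace every second occurrence of an overused type (assumed strategy)."""
    seen = Counter()
    result = []
    for kind in transitions:
        seen[kind] += 1
        if kind in overused and seen[kind] % 2 == 0:
            result.append(alternative)
        else:
            result.append(kind)
    return result
```

For a sequence with four crossfades and one hard cut, a ratio limit of 0.5 would flag the crossfades, and `add_variation` would turn the second and fourth into the chosen alternative.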

Secret extra element?

The trick with patents is to include a major part of the idea in an aside that many people might not notice. This stops competitors guessing future products…

…a transition type for transitioning between adjacent video clips may be automatically selected based [on] data associated with the adjacent video clips, data associated [with] other video clips in the video clip sequence, and/or other transitions in the video clip sequence.

The idea of choosing a transition based on data associated with other clips in the sequence makes me think of the possibility of ‘conditional editing.’ In future, editors might want to set attributes on a clip that affect other clips. Consider a clip showing a closeup of a large knife on a kitchen counter. It might be useful for all other audio tracks to be muted wherever that clip is positioned on the timeline.

Automatic assembly?

In 2008 Intelligent Assistance launched First Cuts Studio for FCP, an application that helps generate the first cut of your documentary. You log your clips with keywords and choose which work as opener footage, interview material and B-roll. You also set in and out points for all the clips you might want to use before the application produces a fast first cut. The site says the software is designed to ‘give you an edited sequence ready for an experienced editor to improve.’

This new Apple patent includes an implementation that would help the software automatically choose in and out points for footage. In the context of the patent the footage that is unlikely to be wanted in an edit is referred to as ‘a defective portion’ of a video clip:

For example, once the video is dropped into (or opened in) the video editing application, the video editing application may automatically identify defective portions of the video clip. A defective portion of a video clip may be identified by performing image analysis to detect rotation in the video image, blur in the video image, over-exposure of the video image, or under-exposure of the video image

Once these portions of video are detected…

The defect and removal process may include automatically selecting and inserting video transitions of an appropriate type between non-defective portions of the video clips such that the default transition (e.g., automatically selected transition) is a visually correct transition for transitioning between adjoining non-defective video clips.

So, hidden in a patent named ‘Smart Transitions’, Apple have also patented using image analysis to remove video footage from clips that probably won’t be needed in a film sequence.
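Here is a minimal Python sketch of how automatic in and out points might fall out of that kind of analysis. It assumes some earlier image-analysis step has already scored every frame for sharpness and brightness on a 0 to 1 scale; those scores, the thresholds and the function name are hypothetical, and rotation detection is left out entirely.

```python
def usable_ranges(frames, min_sharpness=0.3, min_brightness=0.1, max_brightness=0.9):
    """Return (in, out) frame index pairs for runs of non-defective frames.

    `frames` is a list of (sharpness, brightness) scores, one per frame.
    The out point is exclusive, like a Python slice.
    """
    ranges = []
    start = None
    for i, (sharpness, brightness) in enumerate(frames):
        ok = (sharpness >= min_sharpness
              and min_brightness <= brightness <= max_brightness)
        if ok and start is None:
            start = i                    # a usable run begins here
        elif not ok and start is not None:
            ranges.append((start, i))    # blurred or badly exposed frame ends the run
            start = None
    if start is not None:
        ranges.append((start, len(frames)))
    return ranges
```

Each pair returned would become a separate sub-clip, with a transition chosen automatically for each join between them.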

Automatic zooming?

Associated with using image analysis to remove content and to determine transitions, Apple have also applied to patent a method for scaling up clips so audiences can focus on important objects and people in video clips: Smart Scaling and Cropping.

Given that most cinema projectors and TVs max out at a resolution of 2048 by 1152, it is now common for films and TV shows to be shot at a higher resolution, so that editors have the option of zooming in on specific people and things in frame. This patent shows how this scaling up could be automated.
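The arithmetic behind that headroom is simple enough to sketch in Python. The function below is my own illustration, not the method in the patent: it works out the crop rectangle for a given zoom around a point of interest, limits the zoom so the crop never has fewer pixels than the delivery format, and keeps the crop inside the frame. The 4096 by 2304 source size in the usage note is an assumed example.

```python
def crop_rect(source, delivery, center, zoom):
    """Return (x, y, width, height) of the crop in source pixels.

    zoom 1.0 means the widest crop with the delivery aspect ratio;
    the zoom is capped at the point where one source pixel maps to
    one delivery pixel, so nothing is scaled up beyond native detail.
    """
    sw, sh = source
    dw, dh = delivery
    # How much larger the source is than the delivery format
    fit = min(sw / dw, sh / dh)
    zoom = max(1.0, min(zoom, fit))
    w = dw * fit / zoom
    h = dh * fit / zoom
    # Centre on the point of interest, then clamp to the frame edges
    x = min(max(center[0] - w / 2, 0), sw - w)
    y = min(max(center[1] - h / 2, 0), sh - h)
    return (x, y, w, h)
```

With a 4096 by 2304 source and 2048 by 1152 delivery, the maximum zoom is 2.0, so `crop_rect((4096, 2304), (2048, 1152), (1000, 500), 3.0)` is capped and returns a 2048 by 1152 crop pinned to the top-left corner.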

Determining when to cut

These two patents help with transitions, removing bad footage and scaling up badly framed shots, but it will take a while before image analysis will be able to work out when to cut from one shot to another. Perhaps it’s worth reminding ourselves of the six criteria that Walter Murch takes into account when choosing a splice point.

Luckily for most editors, it will be a long time before a computer can take on the hundreds of creative decisions that go into an edit. However, that won’t stop companies like Intelligent Assistance and Apple working towards helping others get good results quickly.

At least producers and directors will initially need editors to set up the rules by which automatic edits will be compiled…

Sounds like great technology to incorporate into iMovie ASAP. I would hope integration into FCP X would only be at the command of the editor.

Yeah, except FCP X already has the metadata and analysis capabilities that this technology builds upon.

Then, consider that FCP X is faster and easier to use than iMovie.

Of course, as you say, this would need to be at the command of the editor — as are the color and sound correction.

The audition capability of FCP X would be quite useful in examining the “automatic” vs the manual assembly of the edits.

It would be an interesting exercise if you could start with a story board and the technology could produce a “suggested” first cut based on the metadata and analysis of the clips.

I suspect that the job of assigning metadata (keywords, smart collections, favorites, rejects, etc.) would be key… and often performed by an assistant editor. Again, this is simple, fast and elegant in FCP X.

I look at technology like this as minimizing the drudgery and allowing the editor to focus his expertise on the important bits.

It would be interesting to see what Intelligent Assistance could do with this technology within FCP X.

Interesting Topic, thanx for bringing this to our attention

Some of the patents could be translated into features for making the process of editing with 2-3 people easier and possible without sending files around.

The automatic stuff sounds a lot like it could be incorporated into something for the noob-user. Would make sense in iMovie…

wouldn’t wanna see it in FCP X though

It would be good for external applications that edit Final Cut Pro X databases.

Professional editors and motion graphics designers could set up many rules that define transitions, bad footage and scale & crop rules. These parameters would help writers and producers work away without worrying about how to trim, ripple and roll. More as a brief for an experienced editor:

1. Check out an interview, mark some favourites, some dependencies (show clip 23 some time before clip 4)

2. Pick some B-roll favourites, including which B-roll goes with which point

3. Pick some faces and objects to highlight (by scaling up, vignetting, colour and focus)

The writer/producer could then press the magic button and see a rough edit. They could then go back to stages 1, 2 and 3 until they are happier with the result.

They can then pass the edit on to us.

That would be……magical:)

If it works as flawlessly as you described it.

Setting up working rule parameters in Mail or Automator took Apple some years to develop. There were always glitches in the first stages of these apps and features. The more specific the process you want to create, the more possible problems.

Rudimentary Presets were also missing completely.

Good point, I also would like to see this in an external app.

As you described in 1.-3. would make total sense.

Keep up the good work. I really like your Topics!

Also your FCP X posts have helped me a lot in editing, as I originally came to film from photography. Also the industrial design background I had has had some weird benefits in setting up concepts.

cheers from munich

Looks like an editor is like a server at Burger King. 10 bucks an hour.

There are 5 patents I read about that this system would be considered to infringe. Very interesting.