…is dead?

Firewire

In multi-touch news, Apple has just been granted a patent for a device that uses an interesting array of sensors:

The touch sensing device also includes a plurality of independent and spatially distinct mutual capacitive sensing nodes set up in a non two dimensional array.

At first reading the invention seems to be about varying the number of sources of capacitance compared with various numbers of sensors. I think the interesting bit is mention of a “non two dimensional array.” If two dimensions is out, there are few other options. Zero- and one-dimensional arrays are unlikely. If Apple planned to make arrays with a number of dimensions above three, they would need a few more patents to cover the technology.

So Apple patents a three dimensional touch interface device… That’s more interesting. As I’ve posted before, that means if the interface device is away from the display device (for reasons of ergonomics or scale – ‘Minority Report’-style), you will be able to get feedback of where your fingers are hovering above the device you are about to touch. Take a look at my post on a user-interface convention using this feature on current applications: ‘not quite direct manipulation.’

On the subject of what multi-touch interfaces will be manipulating in the future…

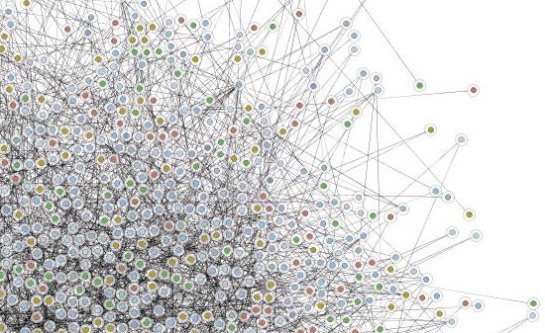

I have recently been working with a company that combines databases together to build ‘social networks’ – to model the way groups of people in society interact. This would be useful within organisations and projects too. If the connections within a project can be generated automatically, they’d be more useful…

I guess there’ll be some sort of three dimensional concept browser that will represent an individual’s model of their understanding and interaction with a project. Each member of the project would see a different view of the project. This is the sort of thing that will gain from direct (multi-touch-supported) manipulation.

…where is the user interface design for multi-touch systems?

My friend Jean sent me a link to a blog on the Microsoft Surface concept. Surface combines the power of multi-touch with table-based Space Invaders games of the early 80s. A couple of cameras monitor where the glass top is touched. That information is passed to a bit of software running on Windows.

Instead of talking about artists collaborating, how about thinking about how the majority of people will benefit from multi-touch interaction? Most people read documents, write documents, calculate figures, look up information and make presentations. How will these activities be changed by multi-touch?

There’s lots of room for buttons on the iPod Touch home screen. How about:

Final Cut Server client: Stream the current version of an FCP project. Make selects and simple edits (a la iMovie 08) on an FCP project on a server somewhere using a gestural interface

AppleHome: stream my 100GB+ collection back home to me whenever I have Wi-Fi access

Sling-Pod: stream my TV tuner signal to me

…I mean second generation iPod Touch. The only reason I’m not buying one today is that the capacity is too low for me. Steve says that the best selling iPod is the Nano. The spec of the Nano shows that most people are happy with 8GB of storage for their music collections. They don’t need any more. That’s why the Touch only comes in 8 and 16GB.

My music library is too large for my 60GB iPod, so I’ll bide my time until Apple release a Touch for people with large media collections…

…a version with a 160GB drive.

Earlier this month Apple patented a multi-touch interface device for a portable computer. One illustration shows a camera above the screen that can detect people’s hands over a wide trackpad:

This means that until every screen that we have is touch sensitive, we’ll have touch devices that can recognise multiple fingers at the same time and that will manipulate things on a separate screen. They’ll have the same feature that the iPhone has: they’ll be able to detect fingers that haven’t quite touched yet. The advantage of that is we can choose where we touch before committing.

Following on from the previous post, fingers that hover could be shown as unfilled circles, while fingers that are touching would be filled transparent circles.

In this example, the editor has their left hand over the multi-touch device. The index finger is touching, so its red circle is filled. As we are in trim mode, the current cursor for the index finger is the B-side roller because it is touching a roller. The other fingers are almost touching. They are shown as unfilled circles with faint cursors that are correct for where they are on the screen: the middle and ring fingers have the arrow cursor. If the little (pinky) finger touched, it would be trimming the A-side roller.

Looks like it might be possible to come up with user interface extensions that let us use new interface devices with older software.

Here’s how a multi-touch interface might work when refining edits. In these screenshots, fingertips are shown as semi-transparent ellipses. When a fingertip is detected above the surface but not touching, it is shown as a semi-transparent circle. I’m using FCP screenshots, but this could work the same way in Avid.

Firstly, you could select edits by tapping them directly. If you want to select more than one edit, you could hold a finger on a selected edit and tap the other edits:

The edits selected:

With edits selected, you can then ripple and roll using two fingers. In the example below, the left finger stays still and the right finger (on ’14 and 13 skin’) moves left and right to ripple the right-hand side of the edits. The software could show which side of the edit is changing as you drag the clips to the right:

If you want to move the left-hand sides of the edits you’d move your left finger and hold the right finger still.

If you wanted to roll the edit, you could use a single finger to move the edits left or right:

If you wanted to slip a clip, you could select the edits on each end of the clip:

The way you use your two fingers defines whether you do a slip or a slide. Which ‘rollers’ get highlighted show which kind of edit you are performing. If you hold an adjacent clip with one finger and move the finger in the middle of the clip, you get a slip edit (the clips before and after would stay the same, the content within the clip will change):

If you only use one finger to move the middle of the clip, you get a slide (the content within the clip will stay the same, it will move backwards or forwards within the timeline, modifying the clips before and after):

It doesn’t take too much to create gestures for other edits…
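As a rough sketch of how software might tell the gestures above apart, here is a small Python function. Everything in it is made up for illustration: the `Finger` class, the target labels (`"edit"`, `"clip"`, `"adjacent_clip"`) and the `classify_trim` name are my assumptions, not part of FCP or Avid. It assumes the system already knows what each finger is resting on and whether it is moving.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Finger:
    target: str   # hypothetical labels: "edit", "clip", "adjacent_clip"
    x: float      # horizontal position on the timeline, in pixels
    moving: bool  # True if the finger is dragging, False if it is held still


def classify_trim(fingers: List[Finger]) -> Optional[str]:
    """Guess the trim type from one moving finger and at most one held finger."""
    moving = [f for f in fingers if f.moving]
    held = [f for f in fingers if not f.moving]
    if len(moving) != 1 or len(held) > 1:
        return None  # this sketch does not cover other combinations
    mover = moving[0]
    anchor = held[0] if held else None

    if mover.target == "edit":
        if anchor is None:
            return "roll"  # a single finger drags the selected edits
        if anchor.target == "edit":
            # The side that moves is the side that gets rippled.
            return "ripple right side" if mover.x > anchor.x else "ripple left side"
    if mover.target == "clip":
        if anchor is None:
            return "slide"  # one finger moves the middle of the clip
        if anchor.target == "adjacent_clip":
            return "slip"  # a neighbouring clip is held still
    return None
```

For example, `classify_trim([Finger("adjacent_clip", 80, False), Finger("clip", 200, True)])` returns `"slip"`, while the moving finger on its own returns `"slide"`.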

Multi-touch controls are the new ‘in thing.’ Soon we’ll be interacting with our tools by touching screens in multiple places at the same time using our fingers. This means that operating systems and applications will be able to respond to gestural interfaces. On the iPhone, moving two fingers together in a pinching motion makes the picture or map smaller on the screen. The opposite movement makes it larger. On some computer-based multi-touch systems, the position of your fingers at the start and finish allows you to rotate as you scale up.
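To make the pinch-and-rotate idea concrete, here is a minimal Python sketch of how scale and rotation could be worked out from where two fingers start and where they finish. This is not how the iPhone or any particular system does it; `pinch_transform` and its parameters are names I have invented for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def pinch_transform(a0: Point, b0: Point, a1: Point, b1: Point) -> Tuple[float, float]:
    """Return (scale, rotation in degrees) for fingers moving a0->a1 and b0->b1."""
    # Vector between the two fingers at the start and at the finish.
    dx0, dy0 = b0[0] - a0[0], b0[1] - a0[1]
    dx1, dy1 = b1[0] - a1[0], b1[1] - a1[1]

    start_distance = math.hypot(dx0, dy0)
    if start_distance == 0:
        raise ValueError("fingers must start at two different points")

    # Scale is the ratio of finger spacing: below 1 is a pinch, above 1 a spread.
    scale = math.hypot(dx1, dy1) / start_distance

    # Rotation is the change in angle of the line between the fingers.
    angle = math.degrees(math.atan2(dy1, dx1) - math.atan2(dy0, dx0))
    # Normalise into the range [-180, 180).
    angle = (angle + 180.0) % 360.0 - 180.0
    return scale, angle
```

If the fingers start 1 unit apart on a horizontal line and finish 2 units apart on a vertical line, the result is a scale of 2 and a rotation of 90 degrees. With screen coordinates, where y increases downwards, a positive angle appears clockwise.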

Here’s a demo from January 2006 showing what a multitouch gestural interface looks like.

How does this impact on the next user interface for editing? If it’s going to be related to multi-touch controls, what will that be like? Will we suffer from new forms of RSI? Will we take our hands off the keyboard to directly manipulate our pictures and sound?

The advantage of mice and graphic pen tablets is that we don’t have to use palettes as large as our screens to manipulate pointers. With a mouse, we can lift it off the desk and move it back through the air in the opposite direction before bringing it down again to keep the pointer moving. However many large screens you have, you never run out of space with a mouse.

With pen tablets, we give up this advantage in return for having control of pen pressure. We need more precise hand control, because the pen movement that corresponds to a single screen position becomes very small when an A5 tablet needs to represent 2 or 3 thousand horizontal positions across a pair of monitors. Wacom tablets can detect pen movements down to an accuracy of 2000 dpi, but how many people have that kind of muscle control?
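To put rough numbers on that, here is a small Python calculation. It assumes the tablet’s active area is as wide as the long edge of an A5 sheet (210 mm); real tablets have somewhat different active areas, so treat the result as a ballpark figure. The function and parameter names are my own.

```python
MM_PER_INCH = 25.4


def tablet_precision(active_width_mm: float, screen_px: int, tablet_dpi: float):
    """Return (mm of pen travel per screen pixel, tablet counts per screen pixel)."""
    # How far the pen moves to shift the pointer by one pixel.
    mm_per_pixel = active_width_mm / screen_px
    # How many positions the tablet can distinguish within that distance.
    counts_per_pixel = tablet_dpi * (mm_per_pixel / MM_PER_INCH)
    return mm_per_pixel, counts_per_pixel


# Assumed figures: 210 mm active width, 3000 horizontal screen positions, 2000 dpi.
mm_per_pixel, counts_per_pixel = tablet_precision(210.0, 3000, 2000.0)
print(f"{mm_per_pixel:.3f} mm per pixel, {counts_per_pixel:.1f} tablet counts per pixel")
```

Under these assumptions, one pixel corresponds to about 0.07 mm of pen travel. The tablet can resolve that (roughly five and a half counts per pixel), so the limit is the hand rather than the hardware.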

Imagine having a pair of displays that add up to 3840 pixels wide by 1200 pixels high, a common editor’s setup. Imagine if these screens were touch screens that could detect every touch your fingers made. What would working with a system like that be like?

Unless we change the way we work with software, our arms are going to be very tired by the end of the day…

One of the good ways to innovate is to jump to the next stage in technology and come up with new ideas there. I would say that Avid, Apple and Adobe’s current interfaces may be tapped out.

I’ve been coming up with some possible gestures and interface tools for editors. Is anyone interested? It’s worth thinking about. We might as well help out Avid or Apple or whoever’s going to come up with the interface that might beat both of them…

Keyboards 1880-1984, Mouse 1984-2009, Multitouch 2009-?